As businesses continue to embrace Kubernetes for its scalability and agility, cost management becomes increasingly critical. We understand that managing cloud costs can be complex, especially in large and dynamic containerized environments. That’s why we’re here to provide you with practical insights and actionable tips to take control of your Kubernetes spending. In our previous blog on Kubernetes Cost Optimization Best Practices – Part 1, we covered the common cost optimization challenges and a few solutions. Let’s see a few more,

Taints & Tolerances

In Kubernetes, “taints” and “tolerations” are mechanisms that can be used strategically to optimize costs by efficiently managing the allocation of workloads to nodes within your cluster. They act as filters in the Kubernetes world. Some of its uses in optimization are,

Efficient Utilization:

Nodes can be ‘tainted’ based on their utilization or capacity, such as CPU or memory usage. This allows you to mark nodes that are underutilized or overutilized. Whereas pods that are designed to scale horizontally can have ‘tolerations’ for nodes with low utilization. When additional resources are needed, more pods can be scheduled on those nodes to efficiently utilize available resources without provisioning new nodes.

Cost Optimization:

You can create custom ‘taints’ to represent various cost-related attributes, such as GPU availability, network bandwidth, or specific hardware types. Also pods can be configured with ‘tolerations’ that align with your custom cost-based policies. This ensures that workloads are deployed on nodes that match their requirements while considering cost factors.

Example:

Let’s say, your web application includes a background processing component that handles non-critical tasks like generating reports or processing non-urgent user requests. These tasks can tolerate occasional interruptions or delays without impacting the overall user experience.

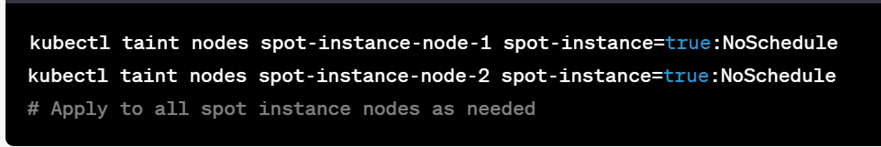

For this case, Taint the nodes that are running on spot instances to indicate their cost-effectiveness but potential for interruptions.

Result: This taint marks these nodes as suitable for running cost-sensitive workloads.

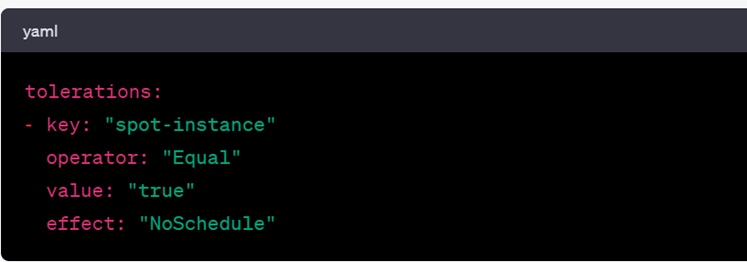

To schedule less critical pods on spot instance nodes, define tolerations as below,

Result: These tolerations allow the pods to be scheduled on nodes with the “spot-instance” taint.

If a spot instance is reclaimed by the cloud provider, Kubernetes will automatically reschedule these pods on other available nodes, maintaining the desired level of service while optimizing costs.

Sleep Mode

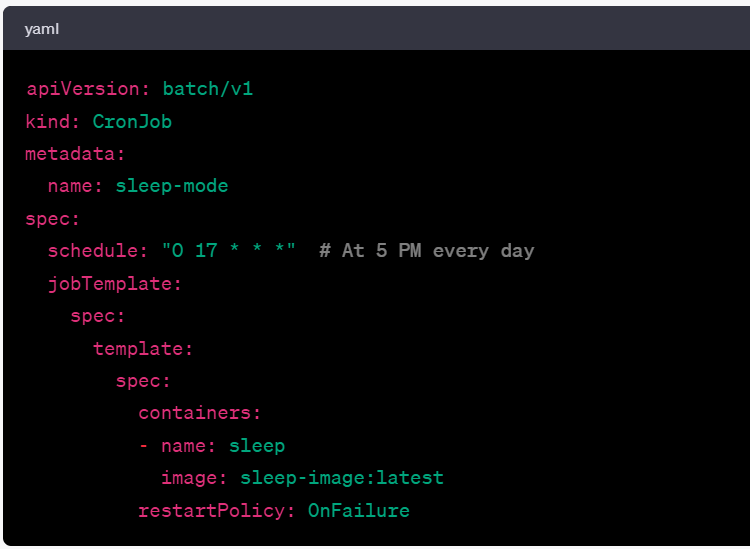

You don’t need all workloads or resources to run 24/7. Especially during non-business hours and weekends. CPU, memory, or storage resources rack up the bills when they are left idle. Putting them into sleep mode with Cronjobs during non-business hours or HPA efficiently scales down the environment when not in use and scales up automatically when needed.

Example: If you want to put your Dev environment to ‘sleep mode’, here’s the code to scale them down at 5.00 P.M. everyday.

Node Pools

Node pools involves grouping nodes with similar characteristics like CPU, memory, etc. This helps in efficient resource management which in turn drives Kubernetes cost optimization. Different cloud providers offer a wide range of VM instance types optimized for various use cases. When you explore the offerings of your chosen cloud provider GCP, AWS, Azure, etc. you can identify instance types that align with your workload requirements. Pay attention to factors like CPU, memory, storage, network performance, and cost.

Kubernetes Cost Monitoring Tools

At Amadis as we always insist, spending is not a bottleneck as long as you SPEND RIGHT on your cloud resources. Spending right starts from analyzing your Kubernetes environment which is possible with Kubernetes cost monitoring tools.

CloudCADI simplifies your complex Kubernetes cost optimization process in a few clicks. Some of its key features are,

Pod-level showback: From your native cloud providers’ bills, you can get the charges of the clusters overall. Whereas in CloudCADI, you have the flexibility to drill down the charges at a lowest possible unit level – pod.

Node resizing: CloudCADI never stops with the Kubernetes cost and utilization overview. Furthermore, it gives intelligent recommendations on how to alter your resources with choices and savings opportunities. Cloud administrators can simply leverage these options rather than manually evaluating the options.

By implementing these cost optimization tricks and regularly reviewing your Kubernetes environment for inefficiencies, you can effectively manage your infrastructure costs while ensuring optimal performance and resource utilization.

SPEND RIGHT on cloud with CloudCADI today.